How AI Sees Your Brand (And Why It Matters)

AI recommendations are not objective. They are an amalgamation of biases baked into training data, weighted by whatever web sources the model retrieves at query time. The brand that AI perceives as the most eco-friendly might have almost no visibility. The brand it sees as time-tested and trustworthy might dominate recommendations. Unless you can see these perceptions, you cannot change them.

TL;DR

How AI perceives your brand and how often it recommends you are two different things. Sill's Semantic Map lets you visualize both by plotting brands on custom axes, revealing perception gaps that no other GEO tool shows.

AI Recommendations Are Not Objective

When someone asks ChatGPT "What are the best gaming peripherals?" the response feels authoritative. It reads like a considered, neutral recommendation. It is not. Every AI response is shaped by two forces: the biases embedded in the model's training data, and the web sources it retrieves at query time through retrieval-augmented generation (RAG).

Training data reflects the internet as it existed at a point in time, with all its skews toward popular brands, English-language content, and sources that happened to be well-indexed. A study by Navigli et al. (2023) found that LLMs encode systematic biases from their training corpora, including demographic, cultural, and commercial biases that persist even after fine-tuning. When an AI model recommends Brand A over Brand B, that recommendation may have less to do with product quality and more to do with which brand had more Reddit threads, review site mentions, and Wikipedia coverage before the training data cutoff.

RAG adds a second layer. When web search is enabled, AI platforms retrieve and synthesize live web sources. But as the foundational GEO paper (Aggarwal et al., KDD 2024) demonstrated, the sources that get cited are not necessarily the most accurate. They tend to be the ones that match what the model already "believes" from training, creating a confirmation loop. In that paper's experiments, adding statistics to content improved its visibility in generated responses by roughly 30-40%, regardless of the underlying quality of the claims.

The result is that AI models build a perception of your brand that may be entirely disconnected from reality. And because GenAI chatbots are now the #1 source influencing B2B vendor shortlists, according to G2's "Buyer Behavior in 2025" research, that disconnected perception is shaping real purchasing decisions.

The Perception-Visibility Gap

Here is what makes this problem particularly difficult: how AI perceives your brand and how visible your brand is in AI responses are two separate things. A model can have a clear, strong opinion about your brand and still almost never recommend you. Perception without visibility means the AI "knows" who you are but does not consider you relevant enough to surface.

An Ahrefs study of 75,000 brands found that the single strongest predictor of AI recommendation is how often a brand is mentioned across the web, with a correlation of 0.664. Domain authority, the metric traditional SEO was built around, showed a correlation of just 0.218. A brand can have perfect on-site SEO and still be invisible to AI.
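To make the comparison concrete, here is a minimal sketch of how a correlation like this could be computed for your own category. Everything in it is an assumption for illustration: the per-brand numbers are invented, the variable names are hypothetical, and Pearson correlation is used only because it is simple to show; the Ahrefs study may have used a different method.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)

# Invented per-brand data (NOT taken from the Ahrefs study).
web_mentions = [120, 450, 900, 1500, 3000]
domain_authority = [70, 35, 80, 40, 60]
ai_mentions = [2, 9, 14, 30, 55]

r_mentions = pearson(web_mentions, ai_mentions)
r_authority = pearson(domain_authority, ai_mentions)
print(f"web mentions vs AI mentions:     r = {r_mentions:.3f}")
print(f"domain authority vs AI mentions: r = {r_authority:.3f}")
```

In this toy data set, web mentions track AI mentions closely while domain authority barely does, which is the shape of the result the study describes. With real data, a larger sample matters more than the choice of coefficient.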

This is why understanding AI perception is not optional. If you do not know how the model positions you, you cannot produce the right content, build the right off-site presence, or close the right gaps. You are optimizing blind.

Seeing Through the Model's Eyes

To illustrate what AI brand perception looks like in practice, let's walk through a real analysis from Sill's Semantic Map feature. The Semantic Map plots how AI models position brands against each other on dimensions you define. The axes are configurable, so you can test any hypothesis about how AI perceives your competitive landscape.

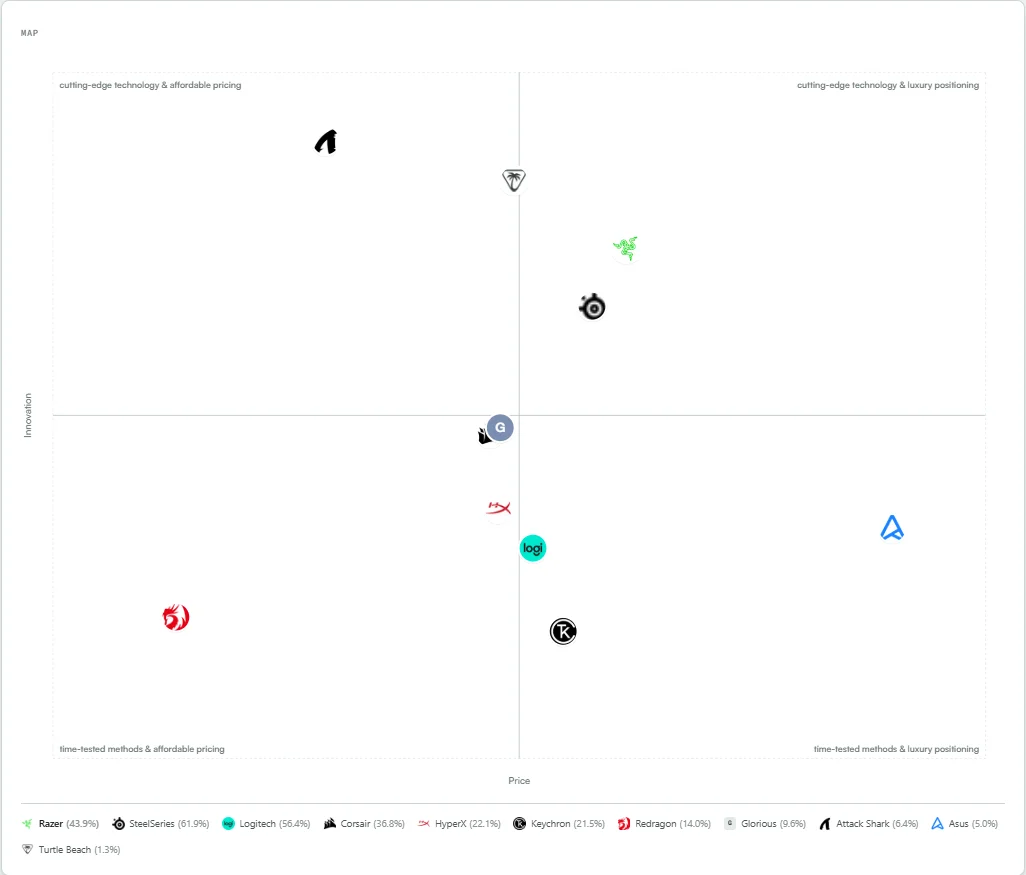

The example below is from the gaming peripherals market, tracking 11 brands across ChatGPT, Perplexity, Gemini, and Google AI Overviews. Each brand is plotted based on how AI models describe and compare them, and the percentage next to each name is their AI Share of Voice score.

Hover over any brand to see its visibility, sentiment, and position scores. The insights get interesting when you start changing the axes.

The first view plots Innovation against Price. A few things stand out immediately. Attack Shark, a relatively unknown brand with just 6.4% SOV, sits alone in the "cutting-edge technology & affordable pricing" quadrant. AI models see it as innovative and budget-friendly. That is a strong perceptual position, but almost nobody sees it because the visibility is so low.

Compare that with SteelSeries at 61.9% SOV. It sits near the center-right of the innovation axis, not at the extreme. AI does not see SteelSeries as the most innovative brand in the space. It sees it as something else, and we need to change the axes to understand what.
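One way to think about a two-axis view like this is that each brand gets a score on each axis, and the sign of each score picks a quadrant. The sketch below is only a mental model: it assumes scores in the range [-1, 1], the `Axis` class and `quadrant` function are hypothetical names, the scores are invented, and none of it describes how Sill actually computes positions.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Axis:
    name: str
    low_label: str   # label used for scores below zero
    high_label: str  # label used for scores at or above zero

    def label(self, score: float) -> str:
        if not -1.0 <= score <= 1.0:
            raise ValueError(f"{self.name} score must be in [-1, 1]")
        return self.high_label if score >= 0 else self.low_label

def quadrant(x_axis: Axis, y_axis: Axis, x: float, y: float) -> str:
    """Name the quadrant a brand falls in, e.g. 'A & B'."""
    return f"{x_axis.label(x)} & {y_axis.label(y)}"

innovation = Axis("Innovation", "time-tested methods", "cutting-edge technology")
price = Axis("Price", "affordable pricing", "luxury pricing")

# Invented scores, chosen only to illustrate the mechanics.
positions = {"Attack Shark": (0.7, -0.6), "Brand X": (-0.4, 0.3)}
for brand, (x, y) in positions.items():
    print(brand, "->", quadrant(innovation, price, x, y))
```

Swapping in a different `Axis` pair is all it takes to re-read the same brands along another dimension, which is the idea behind changing the axes in the views that follow.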

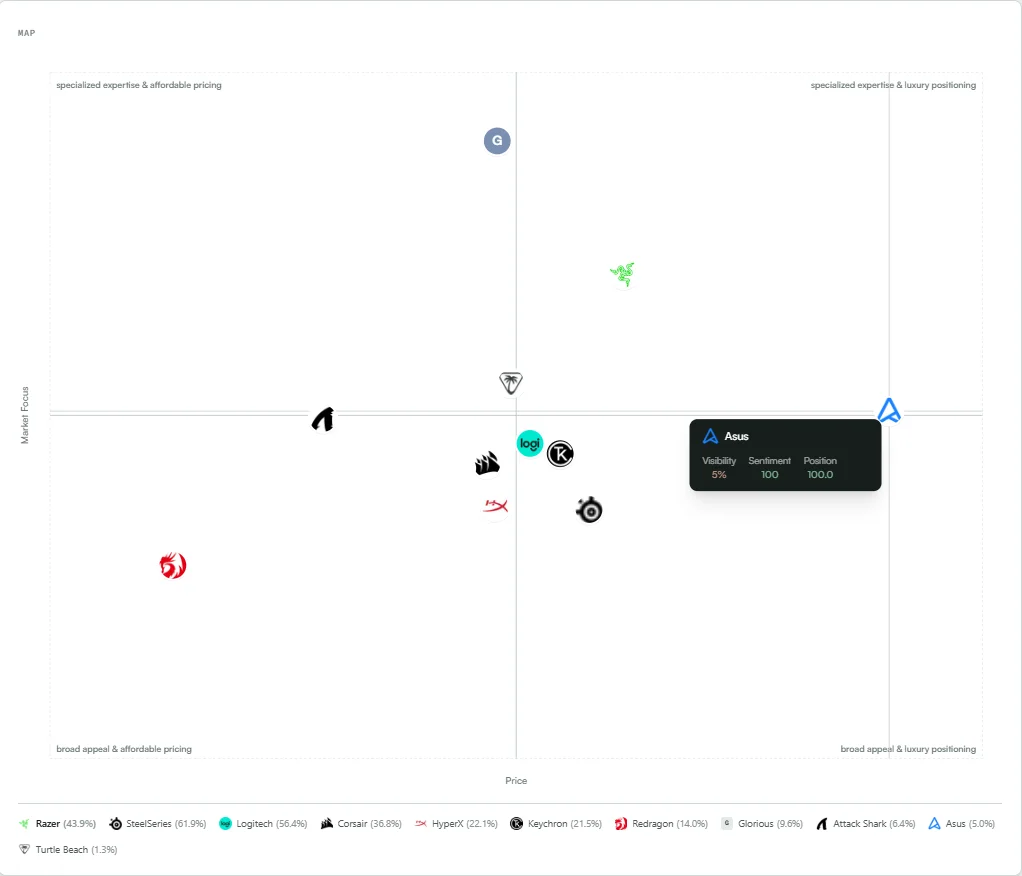

Switching to Market Focus vs. Price reveals a different pattern. Most brands cluster in the broad-appeal center. Razer pulls toward specialized expertise with luxury pricing. Glorious occupies the niche affordable space.

But the standout data point is Asus. Hover over it, and you see: 5% visibility, 100 sentiment, 100 position. When AI models mention Asus in the gaming peripherals context, they give it the highest possible praise and the primary recommendation slot. Every single time. But they almost never mention it. Asus has a 5% SOV, meaning it appears in roughly 1 out of every 20 AI responses.

This is the perception-visibility gap in its purest form. Asus has a perfect AI reputation in this category but almost zero AI presence. Without a tool that separates perception from visibility, a brand like Asus would never know that the AI already thinks highly of it. The problem is not reputation. The problem is that AI models do not associate Asus strongly enough with gaming peripherals to surface it. The fix is not better messaging; it is more off-site mentions in gaming peripheral contexts.
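As a rough illustration of the "1 out of every 20" arithmetic, share of voice can be read as the fraction of sampled AI responses that mention a brand. The sketch below assumes simple case-insensitive name matching over invented responses, and `share_of_voice` is a hypothetical helper; Sill's actual scoring is not described here and may weight mentions differently.

```python
def share_of_voice(responses, brand):
    """Percentage of responses that mention `brand` (case-insensitive)."""
    if not responses:
        raise ValueError("need at least one response")
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return 100.0 * hits / len(responses)

# 20 invented responses; exactly one of them mentions Asus.
responses = ["Logitech and Razer are solid picks."] * 19
responses.append("Asus makes the best option in this category.")

print(share_of_voice(responses, "Asus"))  # 5.0, i.e. 1 response in 20
```

Note that this number says nothing about how the brand is described when it does appear. Sentiment and position have to be measured separately, which is why a 5% score can coexist with perfect sentiment.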

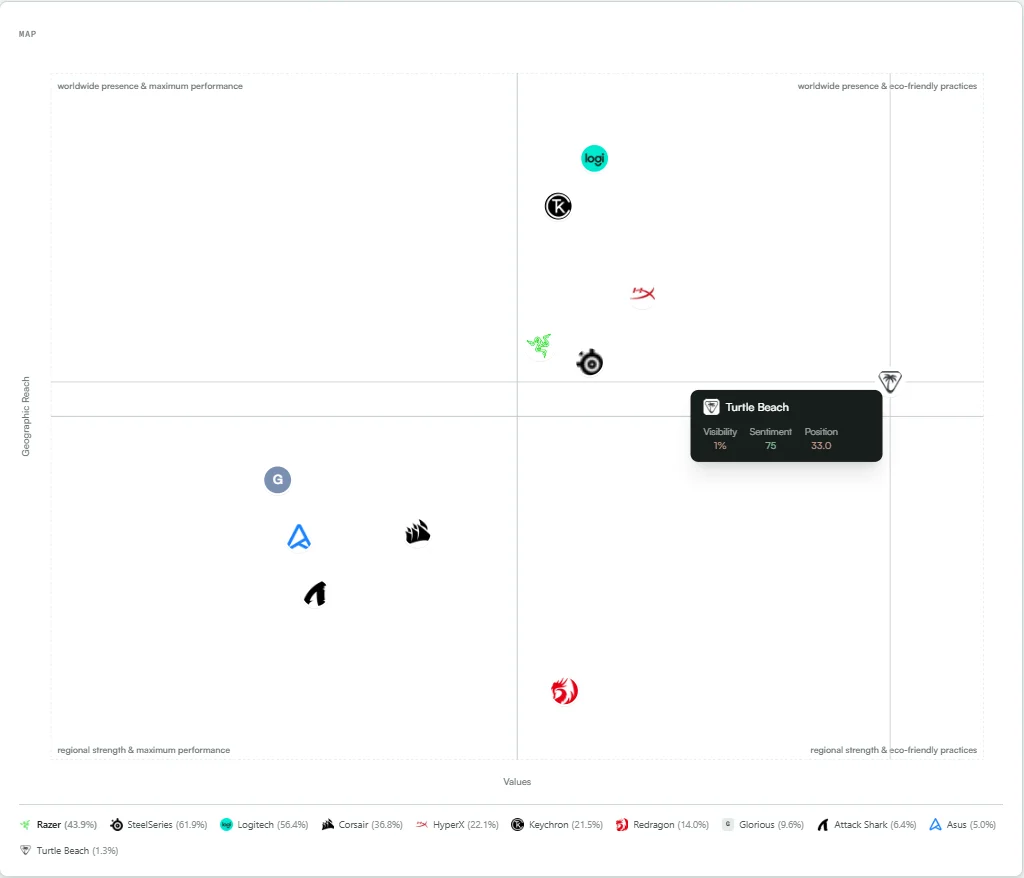

This is the most counterintuitive view. The axes are Geographic Reach vs. Values, ranging from "maximum performance" to "eco-friendly practices." Turtle Beach sits alone in the far right of the map. AI models see it as the brand with the strongest worldwide presence and eco-friendly positioning in the entire competitive set.

And yet Turtle Beach has 1.3% SOV. That is the lowest visibility score of any brand tracked. The tooltip confirms it: 1% visibility, 75 sentiment, 33 position. AI models have a clear perception of Turtle Beach, but when it comes to actually recommending gaming peripherals, they essentially never bring it up.

For Turtle Beach, this data tells a specific story. The AI knows who they are. It even has a positive sentiment about the brand. But the association with gaming peripherals is too weak for the model to surface it as a recommendation. Meanwhile, Logitech (56.4% SOV) and Keychron (21.5% SOV) own the "worldwide presence & maximum performance" quadrant, which is where the actual recommendation volume lives.

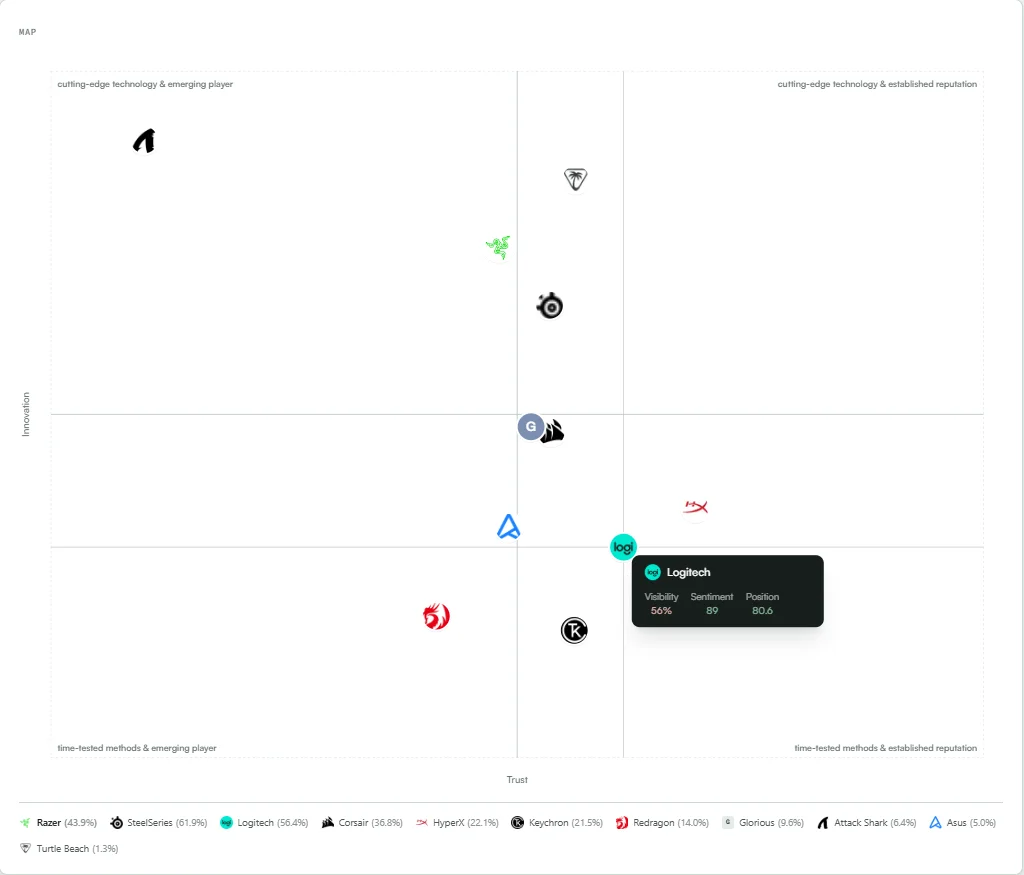

The final view, Innovation vs. Trust, answers the question we raised earlier about SteelSeries. Plot the brands on these axes and the pattern becomes clear. Logitech sits firmly in the "time-tested methods & established reputation" quadrant. Its tooltip: 56% visibility, 89 sentiment, 80.6 position.

Logitech is not seen as the most innovative brand. It is not positioned as cutting-edge. But AI models trust it. They see it as reliable, proven, and established. And that trust translates directly into recommendation frequency. When a buyer asks for gaming peripheral recommendations, AI models default to the brands they perceive as trustworthy, not the ones they perceive as innovative.

This finding aligns with broader research. A study by Wan et al. (ACL 2024, UC Berkeley) found that LLMs favor textual relevance and factual density over stylistic authority signals. Brands with long track records of web mentions, product reviews, and expert references accumulate exactly the kind of factual density that models reward. Innovation gets attention from humans. Trust gets recommendations from AI.

The Full Picture

When you combine perception data from the Semantic Map with visibility scores, the competitive landscape becomes legible. Here is the full breakdown of the 11 brands tracked in this analysis:

| Brand | AI Share of Voice | AI Perception |
|---|---|---|
| SteelSeries | 61.9% | Established, trusted, broad market presence |
| Logitech | 56.4% | Time-tested, reliable, mainstream positioning |
| Razer | 43.9% | Innovative, premium, specialized expertise |
| Corsair | 36.8% | Mid-range, broad appeal, performance-focused |
| HyperX | 22.1% | Performance-oriented, mid-range innovation |
| Keychron | 21.5% | Premium niche, worldwide reach, performance |
| Redragon | 14.0% | Budget, regional, value-oriented |
| Glorious | 9.6% | Specialized, affordable, enthusiast niche |
| Attack Shark | 6.4% | Cutting-edge, affordable, emerging player |
| Asus | 5.0% | Broad appeal, luxury positioning, perfect sentiment when mentioned |
| Turtle Beach | 1.3% | Eco-friendly, worldwide presence, but nearly invisible |

The pattern is consistent across every axis combination we tested. Brands that AI perceives as established, trusted, and mainstream dominate recommendations. Brands with distinctive positioning on innovation, sustainability, or specialization may have strong perceptions but weak visibility. Without the ability to see both dimensions, you cannot diagnose the problem or design the right fix.

Understanding Perception Drives the Right Strategy

The reason perception data matters is that it changes what you do next. Without it, every brand gets the same generic advice: "create more content, build more backlinks, get more reviews." With it, you get specific, actionable direction.

Take the three low-visibility brands from our analysis. Each has a different problem requiring a different fix:

- Asus (5% SOV, perfect sentiment): AI already loves the brand. The fix is not reputation. It is context association. Asus needs more web content explicitly connecting it to gaming peripherals, not gaming laptops or motherboards. More gaming peripheral roundup mentions, more review site presence in that specific category.

- Turtle Beach (1.3% SOV, eco-friendly perception): AI has a clear but wrong category association. Turtle Beach is known for gaming headsets, but the model associates it more with eco-friendliness than gaming performance. The fix is content that reframes the brand around its core gaming identity, backed by off-site mentions that reinforce that positioning.

- Attack Shark (6.4% SOV, innovative perception): AI sees innovation and affordability, but the brand lacks the web mention volume to break through. The fix is pure off-site presence: getting mentioned on Reddit, YouTube, review sites, and comparison articles. The perception is already right. The signal just needs to be louder.

None of these strategies would be obvious from a visibility score alone. You need to know both what the AI thinks and how often it says it.
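The triage above can be sketched as a small rule set that reads visibility together with sentiment and position. This is a minimal sketch under stated assumptions: the three cutoffs are hypothetical, the `BrandScores` class and `diagnose` function are illustrative names, and position is assumed to run from 0 to 100 with higher meaning closer to the top recommendation slot. The input values are the tooltip figures quoted earlier.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BrandScores:
    name: str
    visibility: float  # percent of responses mentioning the brand
    sentiment: float   # 0-100
    position: float    # 0-100, assumed: higher = nearer the top slot

# Hypothetical cutoffs; tune them for your own category.
LOW_VISIBILITY = 10.0
STRONG_SENTIMENT = 70.0
STRONG_POSITION = 70.0

def diagnose(b: BrandScores) -> str:
    """Map a brand's scores to a coarse next-step recommendation."""
    if b.visibility >= LOW_VISIBILITY:
        return "visible: compare perception against the leaders"
    if b.sentiment >= STRONG_SENTIMENT and b.position >= STRONG_POSITION:
        return "perception-visibility gap: build category association"
    if b.sentiment >= STRONG_SENTIMENT:
        return "liked but ranked low: reinforce core category positioning"
    return "low visibility and weak perception: address both"

# Tooltip values quoted in the analysis above.
brands = [
    BrandScores("Asus", 5, 100, 100),
    BrandScores("Turtle Beach", 1, 75, 33),
    BrandScores("Logitech", 56, 89, 80.6),
]
for b in brands:
    print(f"{b.name}: {diagnose(b)}")
```

A visibility score alone would put Asus and Turtle Beach in the same bucket. Adding the two perception scores is what separates them.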

A Feature No Other GEO Tool Offers

We surveyed every major AI visibility and GEO platform on the market. None of them offer a semantic positioning map with configurable axes. The entire category is focused on one dimension: visibility scores, mention counts, and citation tracking. That is necessary but not sufficient.

Here is what the leading platforms do and do not offer:

| Platform | SOV Tracking | Sentiment | Multi-Platform | Semantic Map |
|---|---|---|---|---|
| Otterly.AI | Yes | Yes | Yes | No |
| Profound | Yes | Yes | Yes | No |
| Peec AI | Yes | Yes | Yes | No |
| Scrunch AI | Yes | Yes | Yes | No |
| Sill | Yes | Yes | Yes | Yes |

Otterly.AI offers prompt monitoring, GEO audits, and citation gap analysis. It is a solid monitoring tool. But it shows you visibility numbers and keyword-level tracking, not how AI positions your brand relative to competitors on perception dimensions.

Profound (backed by Sequoia, $35M Series B) has a Conversation Explorer feature that surfaces real user AI prompts and an Agent Analytics dashboard. It is strong on the demand side, showing you what buyers ask. But it does not show you how AI models perceive your brand across configurable dimensions.

Peec AI ($21M Series A, 1,300+ brands) uses browser automation to capture real AI chat responses, avoiding the API-vs-chat gap. It tracks visibility, position, and sentiment over time. But its analysis stops at metrics. There is no way to explore how the model positions you on dimensions like innovation, trust, price perception, or market focus.

Scrunch AI monitors 8 AI platforms, offers persona-based prompt analytics, and has an Agent Experience Platform for optimizing content for AI crawlers. It covers the monitoring and optimization loop. But like every other tool in the space, it does not offer a semantic positioning map that lets you see where AI places your brand on custom perceptual axes.

Visibility Without Perception Is Half the Picture

The GEO industry is converging on a standard feature set: track visibility, measure sentiment, monitor multiple platforms. That baseline is necessary. But it only answers the question "how often does AI mention my brand?"

It does not answer the harder, more important questions:

- How does AI position my brand relative to competitors on dimensions that matter to buyers?

- Is there a gap between how I want to be perceived and how the model actually sees me?

- Which perceptual attributes correlate with high visibility in my category?

- What specific positioning shift would move me into the recommendation zone?

The Harvard Business Review's "Share of Model" framework argues that brands need to understand the internal representations that LLMs build about them. That is exactly what the Semantic Map provides: a direct window into how AI models have encoded your brand's positioning, updated daily with real data from real AI responses.

Only by understanding your positioning as AI sees it can you produce the content and build the presence needed to drive the positioning you want. Everything else is guesswork.

See How AI Sees You

The Semantic Map is available today in the Sill monitoring dashboard. Define the dimensions that matter for your market, and see exactly where AI places your brand. Combine it with daily SOV tracking, sentiment analysis, and competitor rankings to get the full picture of your AI visibility.

If you want to see where your brand stands before committing, a free snapshot is available to every new account. Questions? Reach out below.

You cannot optimize what you cannot see. Start seeing.

See how AI positions your brand

Map your brand on custom perceptual axes, track visibility daily, and get the full picture of how AI models perceive and recommend you versus competitors.

References

- Navigli, R., et al. "Biases in Large Language Models: Origins, Inventory, and Discussion." ACM Journal of Data and Information Quality, 2023. arxiv.org/abs/2305.14552

- Aggarwal, P., et al. "GEO: Generative Engine Optimization." KDD 2024, Princeton/Georgia Tech/IIT Delhi. arxiv.org/abs/2311.09735

- Wan, A., et al. "What Evidence Do Language Models Find Convincing?" ACL 2024, UC Berkeley. arxiv.org/abs/2402.11782

- Ahrefs. "LLM Brand Visibility Study (75,000 brands)." ahrefs.com

- G2. "Buyer Behavior in 2025." company.g2.com

- Harvard Business Review. "Forget What You Know About SEO: Here's How to Optimize Your Brand for LLMs." June 2025. hbr.org

Get a Demo

Tell us about your brand and we'll be in touch to walk you through Sill.